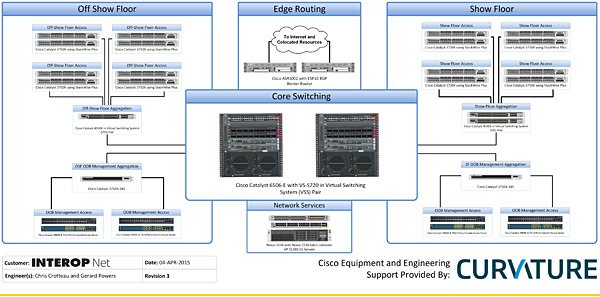

This year at Interop, Curvature has provided the majority of the wired infrastructure for both the show floor and the conference space. Here’s an overview of what we brought and why we chose each device.

Internet Edge

At the internet edge, Curvature has brought a pair of ASR 1002 routers. The external connections are 1Gbps and the connection to the core must be 10Gbps, so a high-performance router is needed. The ASR 1002 is generally used in routing environments that need 1-10Gbps of throughput, making it a good fit for this application. Additionally, to ensure the highest availability, multiple WAN connections are brought in, and BGP is used to direct traffic to the Internet over the best available connection.
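To make "the best connection" concrete, here is a minimal Python sketch of how a BGP-style decision could rank routes to the same prefix learned over different WAN links. It is an illustration under stated assumptions, not the configuration used at the show: the upstream names and attribute values are hypothetical, and only three of BGP's many tie-breakers (local preference, AS path length, origin) are modeled.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Route:
    upstream: str      # hypothetical label for the WAN connection
    local_pref: int    # higher is preferred
    as_path_len: int   # shorter is preferred
    origin: int        # 0 = IGP, 1 = EGP, 2 = incomplete; lower is preferred


def best_path(routes: Iterable[Route]) -> Optional[Route]:
    """Pick a route using a simplified subset of BGP's tie-breakers."""
    candidates = list(routes)
    if not candidates:
        return None
    # Compare local preference first, then AS path length, then origin.
    return min(candidates, key=lambda r: (-r.local_pref, r.as_path_len, r.origin))


# Two hypothetical upstreams advertising the same prefix.
routes = [
    Route("wan-a", local_pref=100, as_path_len=3, origin=0),
    Route("wan-b", local_pref=100, as_path_len=2, origin=0),
]
primary = best_path(routes)      # wan-b: same local pref, shorter AS path

# If wan-b goes down, its route is withdrawn and selection runs again.
remaining = [r for r in routes if r.upstream != "wan-b"]
fallback = best_path(remaining)  # wan-a is the only candidate left
```

The point of the sketch is the failover behavior: when the preferred connection disappears, the same selection logic simply picks the next-best route, which is what keeps traffic flowing across multiple WAN links.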

Core Switching

For core switching, we have brought a pair of Catalyst 6506-E switches using the VS-S720-10G-3C supervisor. To simplify the network topology, we chose to run these switches in VSS mode, which turns both units into one large virtual chassis. This lets us do multi-chassis link aggregation: several Ethernet ports on a connected device are combined into a single logical interface, and the other end of that connection terminates on both switches. Without VSS mode, we would not be able to use this feature and would instead have only a single uplink active at any one time. Interop is a demanding networking environment, but traffic data from past shows indicated that the older VS-S720 and its associated 10GbE line cards would easily handle the show's traffic volume, so current production hardware was simply not needed here.
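As a rough illustration of why multi-chassis link aggregation keeps every uplink in use, the Python sketch below hashes each flow onto one member of a bundle that spans both chassis, then re-maps the flows when one chassis drops out. The link names and addresses are hypothetical, and CRC32 is only a stand-in for whatever hash the switch hardware actually applies.

```python
import zlib
from typing import Sequence, Tuple

Flow = Tuple[str, str, int, int]  # src IP, dst IP, src port, dst port


def pick_link(flow: Flow, active_links: Sequence[str]) -> str:
    """Map a flow onto one active member link of the bundle."""
    if not active_links:
        raise ValueError("no active links in the bundle")
    # Hashing the flow fields keeps every packet of a flow on the same link.
    key = "|".join(str(field) for field in flow).encode()
    return active_links[zlib.crc32(key) % len(active_links)]


# One logical interface whose members land on two different chassis.
bundle = ["chassis1:uplink1", "chassis2:uplink1"]
flows = [("10.0.0.%d" % i, "192.0.2.1", 40000 + i, 443) for i in range(1, 9)]

before = {flow: pick_link(flow, bundle) for flow in flows}

# Simulate losing chassis 1: its member links leave the bundle.
survivors = [link for link in bundle if not link.startswith("chassis1:")]
after = {flow: pick_link(flow, survivors) for flow in flows}
```

In this model, a chassis failure only shrinks the list of active links; the logical interface stays up and every flow is re-mapped onto the surviving members.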

Access Aggregation

Our access layer switches are aggregated by a pair of Catalyst 4500-X switches. As with the 6500s, we have chosen to run these switches in VSS mode, which simplifies our network topology and provides a high level of fault tolerance. Space constraints called for a 1RU switch, and performance needs dictated 10GbE ports wherever possible, making the 4500-X an easy fit for this application.

Access Layer

At the access layer, we’ve brought Catalyst 3750-X switches. With gigabit Ethernet, PoE+ support, dual power supplies, and options for gigabit or 10 gigabit uplinks, these switches provide all the features needed for end users at the show. Additionally, since these switches have been in production for many years, their features are well documented and the software has very few bugs remaining, which is very important for reliable service delivery to the Interop show floor.

Network Services

Finally, to provide a platform for network services, we’ve brought a pair of HP DL380 Gen6 servers. Although they are a few generations old, these platforms still support 128GB+ of memory and a pair of six-core CPUs, and they can be upgraded with a 10GbE NIC. That will easily handle the number of virtual machines other vendors will be running on the InteropNet. Connectivity is provided by a Nexus 5548 switch; for other equipment in the network services area that has only gigabit ports, a Nexus 2248 fabric extender is attached to the 5548.

If you liked what you read in this blog, you might also enjoy:

http://www.curvature.com/blog/10-Things-You-May-Not-Know-About-Servers-and-Storage